What Are CPU, GPU, and Inference?

CPU (Central Processing Unit)

The general-purpose brain of a system. Designed for versatility, complex logic, and sequential decision-making. Strong at handling diverse workloads with low latency.

GPU (Graphics Processing Unit)

Originally built for graphics rendering, GPUs are optimized for massive parallel computation — performing the same operation across thousands of data points simultaneously.

Inference

Inference is the stage where a trained AI model makes predictions on new data. Unlike training (which is compute-heavy), inference is often continuous, latency-sensitive, and user-facing.

The interconnection:

During inference, a model relies on hardware (CPU or GPU) to execute its mathematical operations. The architecture determines how efficiently these operations scale, whether the priority is parallel throughput (GPU) or responsive decision logic (CPU).

Those are the definitions. The real question is how to choose between them, and why CPUs are so often the preferred option:

“Should we use CPUs or GPUs for inference?”

The answer depends on workload behavior, scale, and business priorities.

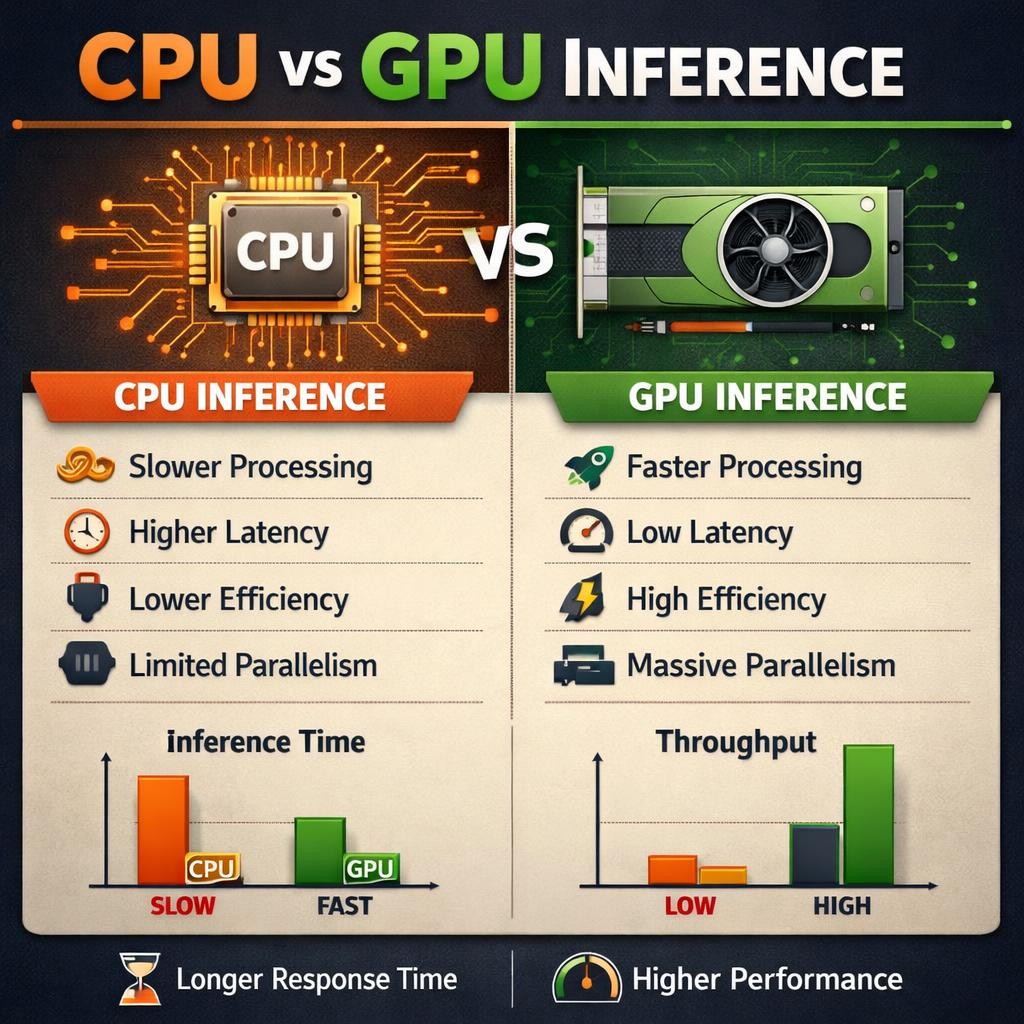

1. Throughput vs Latency: Start Here

- GPUs excel at high parallelism → ideal for high-throughput batch inference.

- CPUs offer strong per-core performance → often better for low-latency, real-time inference.

If milliseconds matter, CPUs are often competitive.

If massive concurrent processing is required, GPUs dominate.
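The trade-off can be made concrete with a toy model. The sketch below assumes each batch costs a fixed overhead plus a per-item time. The function `batch_stats` and both device profiles are hypothetical values chosen for illustration, not measurements of any real hardware.

```python
def batch_stats(batch_size, fixed_overhead_ms, per_item_ms):
    """Latency and throughput of one batch under a simple linear cost model."""
    latency_ms = fixed_overhead_ms + per_item_ms * batch_size
    throughput_per_s = batch_size / (latency_ms / 1000.0)
    return latency_ms, throughput_per_s


# Hypothetical profiles chosen for illustration only, not measurements.
# "cpu-like": little fixed overhead, slower per item.
# "gpu-like": more fixed overhead (launch, transfer), much faster per item.
CPU_LIKE = {"fixed_overhead_ms": 1.0, "per_item_ms": 4.0}
GPU_LIKE = {"fixed_overhead_ms": 8.0, "per_item_ms": 0.1}

for batch_size in (1, 8, 64):
    for name, profile in (("cpu-like", CPU_LIKE), ("gpu-like", GPU_LIKE)):
        latency_ms, throughput = batch_stats(batch_size, **profile)
        print(f"batch={batch_size:>2} {name}: {latency_ms:6.1f} ms, {throughput:8.1f} req/s")
```

With these assumed numbers, the CPU-like profile answers a single request sooner, while the GPU-like profile serves far more requests per second at batch size 64. Real profiles have to come from measurement.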

2. Utilization Matters More Than Peak Performance

A GPU delivering 10x peak performance sounds impressive.

But if:

- Workloads are bursty

- Batch sizes are small

- Accelerator utilization stays low

then you may be paying for idle silicon.

In many enterprise deployments, optimized CPU inference can deliver a lower cost per request, because the hardware you pay for is kept busier.
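A rough way to see the effect of utilization is to divide what a machine costs per hour by the requests it actually serves in that hour. The sketch below is a simplification: `cost_per_million_requests` is a hypothetical helper, and the hourly prices, peak rates, and utilization figures are assumed values, not quotes or benchmarks.

```python
def cost_per_million_requests(hourly_cost, peak_rps, utilization):
    """Cost of serving one million requests at a given average utilization.

    utilization is the fraction (0 < u <= 1) of peak throughput actually used.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    served_per_hour = peak_rps * utilization * 3600
    return hourly_cost / served_per_hour * 1_000_000


# Assumed figures for illustration only.
cpu_cost = cost_per_million_requests(hourly_cost=1.0, peak_rps=100, utilization=0.6)
gpu_idle = cost_per_million_requests(hourly_cost=10.0, peak_rps=1000, utilization=0.1)
gpu_busy = cost_per_million_requests(hourly_cost=10.0, peak_rps=1000, utilization=0.9)

print(f"cpu @60%: {cpu_cost:.2f}  gpu @10%: {gpu_idle:.2f}  gpu @90%: {gpu_busy:.2f}")
```

Under these assumptions the accelerator with 10x peak throughput is the more expensive option per request when it sits at 10% utilization, and the cheaper one when it is kept at 90%.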

3. Operational and Portability Considerations

CPU inference:

- Runs on existing infrastructure

- Easier deployment at the edge

- Less architectural specialization

GPU inference:

- Requires accelerator-aware optimization

- Higher infrastructure and power demands

- Deeper performance tuning effort

Operational complexity is often underestimated in early planning.

4. Total Cost of Ownership Is the Real Metric

Decision-makers evaluate:

- Hardware cost

- Power and cooling

- Optimization effort

- Scaling model

- Lifecycle flexibility

Peak benchmark numbers alone do not define business success.
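The quantifiable items in that list can be combined into one figure. The following is a minimal sketch, assuming a flat electricity price, cooling expressed as a fraction of IT power, and optimization effort expressed in engineer hours. The `Deployment` fields and `total_cost_of_ownership` are hypothetical names, and lifecycle flexibility is not modeled because it does not reduce to a single number.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    hardware_cost: float       # purchase price per node
    power_kw: float            # average power draw per node, in kW
    cooling_overhead: float    # 0.4 means cooling adds 40% on top of IT power
    optimization_hours: float  # one-off engineering effort for tuning
    nodes: int                 # node count implied by the scaling model


def total_cost_of_ownership(d, years, price_per_kwh, engineer_hourly_rate):
    """Sum hardware, energy (including cooling), and optimization effort."""
    hours = years * 365 * 24
    hardware = d.hardware_cost * d.nodes
    energy = d.power_kw * (1 + d.cooling_overhead) * hours * price_per_kwh * d.nodes
    engineering = d.optimization_hours * engineer_hourly_rate
    return hardware + energy + engineering


# Assumed inputs for illustration only.
example = Deployment(hardware_cost=1000, power_kw=1.0, cooling_overhead=0.0,
                     optimization_hours=10, nodes=2)
print(total_cost_of_ownership(example, years=1, price_per_kwh=0.1,
                              engineer_hourly_rate=100))
```

Running the same function over a CPU fleet and a GPU fleet, each sized to meet the same request rate, gives a like-for-like comparison that a peak benchmark cannot.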

Final Thought

CPU vs GPU is not a hardware debate.

It is a workload-characterization and business-alignment decision.

When benchmarking, workload tuning, and performance validation are done correctly, the right answer becomes data-driven, not trend-driven!
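As a starting point for that kind of validation, here is a minimal benchmarking sketch using only the Python standard library. The `benchmark` helper and the `predict` callable are hypothetical stand-ins for whatever model call is under test. It runs requests sequentially, so it measures single-stream latency and says nothing about behavior under concurrent load.

```python
import statistics
import time


def benchmark(predict, inputs, warmup=10):
    """Measure per-request latency percentiles and sequential throughput."""
    # Warm-up calls are excluded from timing (caches, lazy initialization).
    for x in inputs[:warmup]:
        predict(x)

    latencies_ms = []
    start = time.perf_counter()
    for x in inputs:
        t0 = time.perf_counter()
        predict(x)
        latencies_ms.append((time.perf_counter() - t0) * 1000.0)
    elapsed_s = time.perf_counter() - start

    # quantiles(n=100) returns 99 cut points; index 49 is p50, index 98 is p99.
    cuts = statistics.quantiles(latencies_ms, n=100)
    return {
        "p50_ms": cuts[49],
        "p99_ms": cuts[98],
        "throughput_rps": len(inputs) / elapsed_s,
    }


# Placeholder workload; replace the lambda with a real inference call.
print(benchmark(lambda x: sum(i * i for i in range(x)), [1000] * 200))
```

Running the same harness against the same inputs on each candidate platform puts tail latency (p99) and throughput side by side, which is the data the decision should rest on.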